Embry-Riddle Aeronautical University

eHMI conceptual design

Across the globe, an estimated 14 million semi-autonomous vehicles were on the road in 2020. But how do vehicles that require little input from the driver handle tasks normally left to a human operator, such as determining whether the vehicle or a pedestrian crosses first at an intersection? To address this problem, we designed a new external human-machine interface (eHMI) that uses visual and audio cues to communicate between human and computer.

Affiliation:

Embry-Riddle Aeronautical University

Deliverables:

Academic report paper, digital sketches, oral defense presentation

Project period:

6 weeks

Team members:

5

All design sketches courtesy of Michael C. Falanga.

The challenge

Level 2 autonomous vehicles currently have no streamlined system for communicating with other road users, including other drivers, pedestrians, and construction workers. We therefore analyzed the human factors and kinesiology of the existing communication between drivers and road users. Based on our findings, we designed a system that mimics these natural processes and adapts them for a computer system.

The solution

Our conceptual design incorporates a cohesive system of three AI vision cameras, a combination LED message board and projector housing mounted on the grille, speakers mounted on the A-pillars and front grille, and an adaptive tone signaling component.

Artificial Intelligence vision cameras

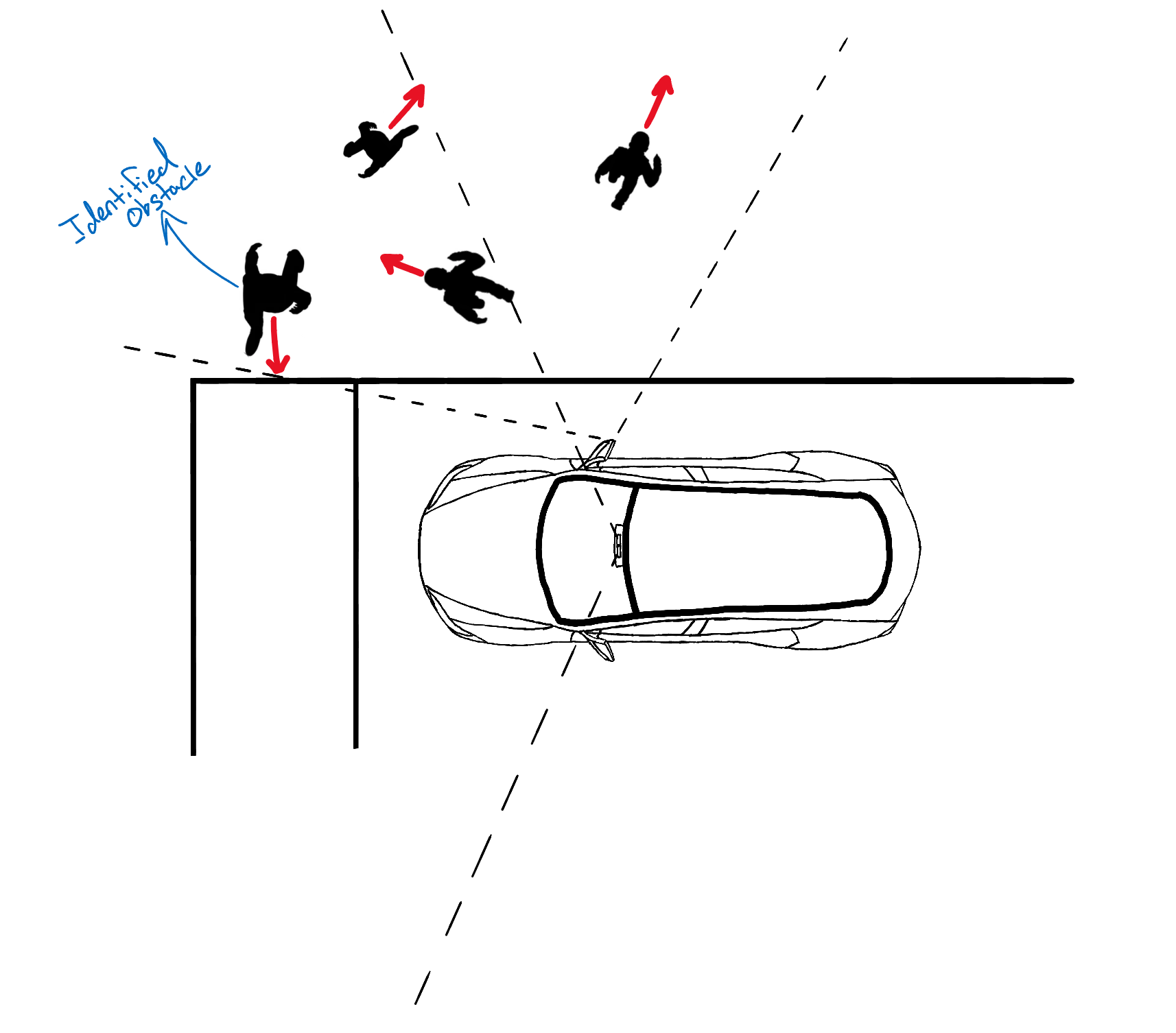

The cameras will work in conjunction with onboard maps to identify an intersection. Several algorithms have proven reliable at visually detecting intersections, but cross-checking their output against onboard maps adds redundancy. Once the vehicle has stopped at the intersection, each camera will visually scan for pedestrians.

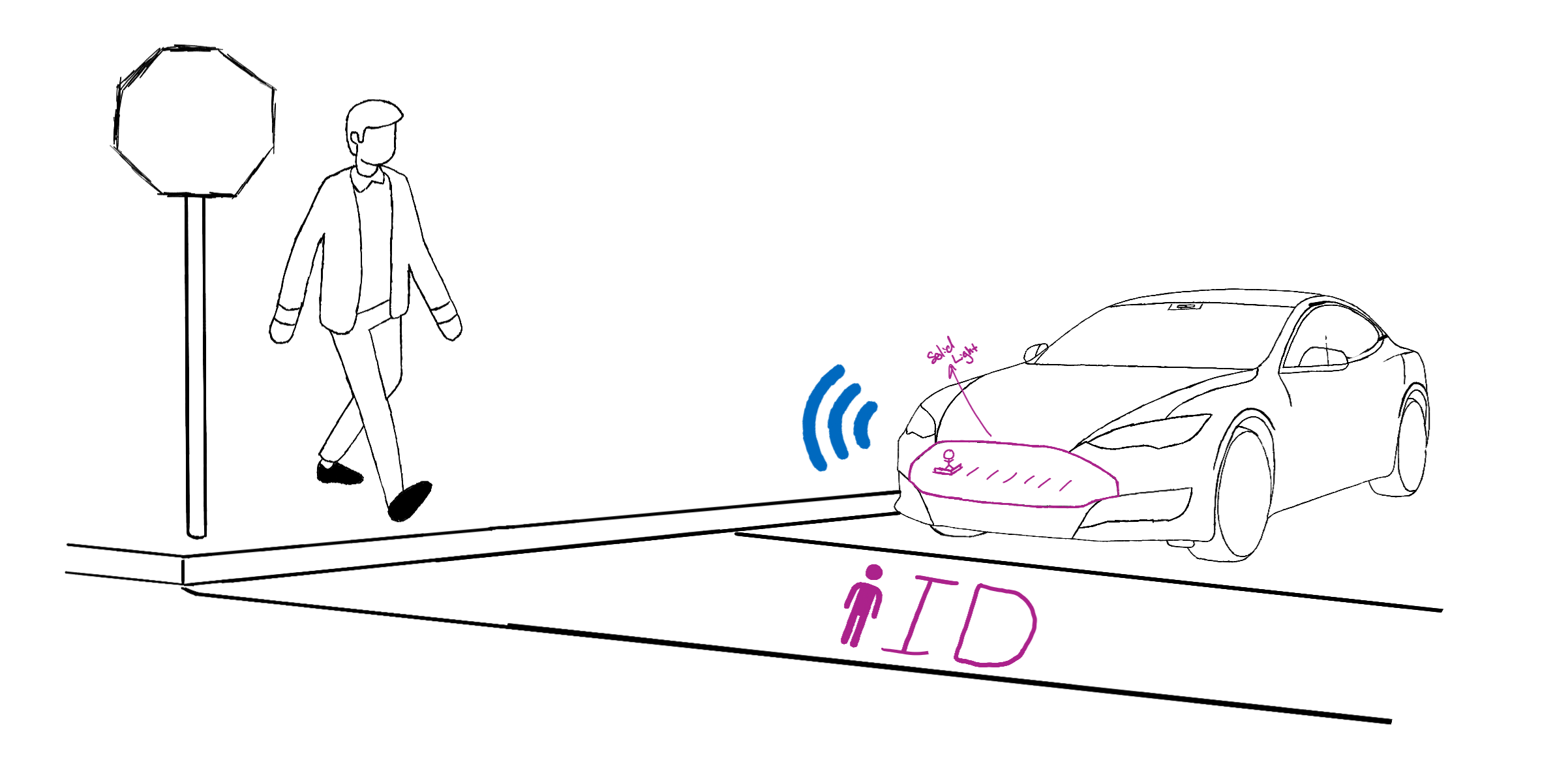

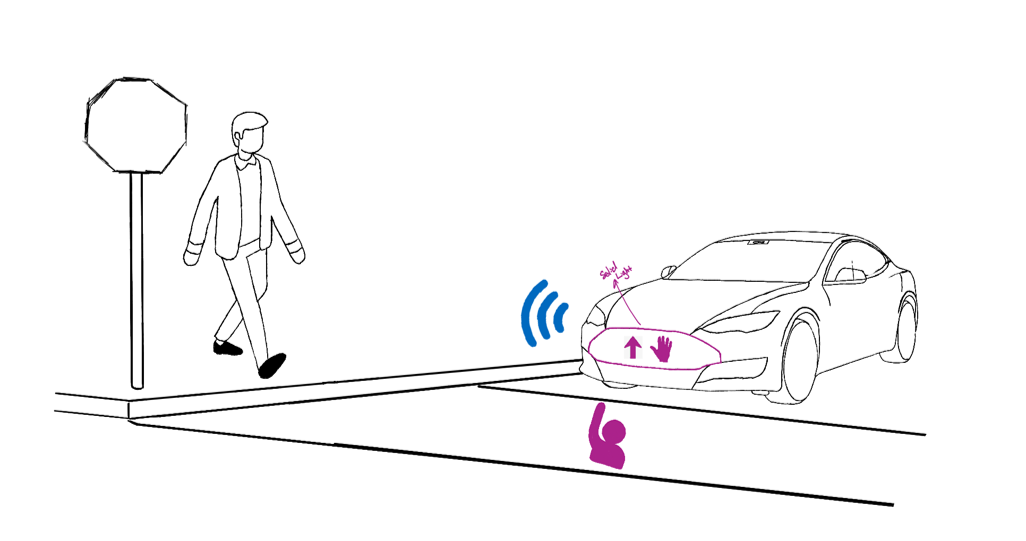
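The camera and map cross-check described in this section could be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the team's implementation: the `CameraFrame` type, its field names, and the rule that vision and map must both agree are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CameraFrame:
    """Hypothetical per-camera detection result; field names are assumptions."""
    camera_id: str
    intersection_detected: bool
    pedestrian_detected: bool


def at_intersection(frames: List[CameraFrame], map_says_intersection: bool) -> bool:
    """Confirm an intersection only when vision and the onboard map agree.

    Requiring agreement is one possible cross-check rule (an assumption).
    """
    vision_says_intersection = any(f.intersection_detected for f in frames)
    return vision_says_intersection and map_says_intersection


def pedestrians_present(frames: List[CameraFrame], speed_mps: float) -> bool:
    """Scan every camera for pedestrians, but only once the vehicle has stopped."""
    if speed_mps > 0.0:
        return False  # still moving: the pedestrian scan has not started yet
    return any(f.pedestrian_detected for f in frames)
```

Here a single camera reporting a pedestrian is enough to count as a detection, which errs on the side of deferring to the pedestrian.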

LED message board & projector

Our system will use a pedestrian-first approach: the vehicle will always defer to the pedestrian, allowing them to cross first. To convey this information, our message board will display an animated pedestrian walking across a digital crosswalk. Simple words like “cross,” “stop,” or “wait” could either replace the animated graphics or appear alongside them.
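As a rough illustration of the pedestrian-first rule, the message board logic could be reduced to a small lookup. The mapping of words to vehicle states below is an assumption made for this sketch; the design only names the candidate words, and the `Message` enum and `select_message` function are hypothetical.

```python
from enum import Enum


class Message(Enum):
    """Words the board might show; the state mapping below is an assumption."""
    NONE = ""
    WAIT = "wait"
    CROSS = "cross"


def select_message(vehicle_stopped: bool, pedestrian_detected: bool) -> Message:
    """Pick the board message under a pedestrian-first policy."""
    if not pedestrian_detected:
        return Message.NONE  # nobody to address, so the board stays blank
    # The vehicle always yields: invite crossing as soon as it has fully stopped.
    return Message.CROSS if vehicle_stopped else Message.WAIT
```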

Speakers

To complement the visual components, and to include all segments of the population, the design adds auditory components. Speakers mounted in both A-pillars and the front grille will augment the visual statements with verbal cues.

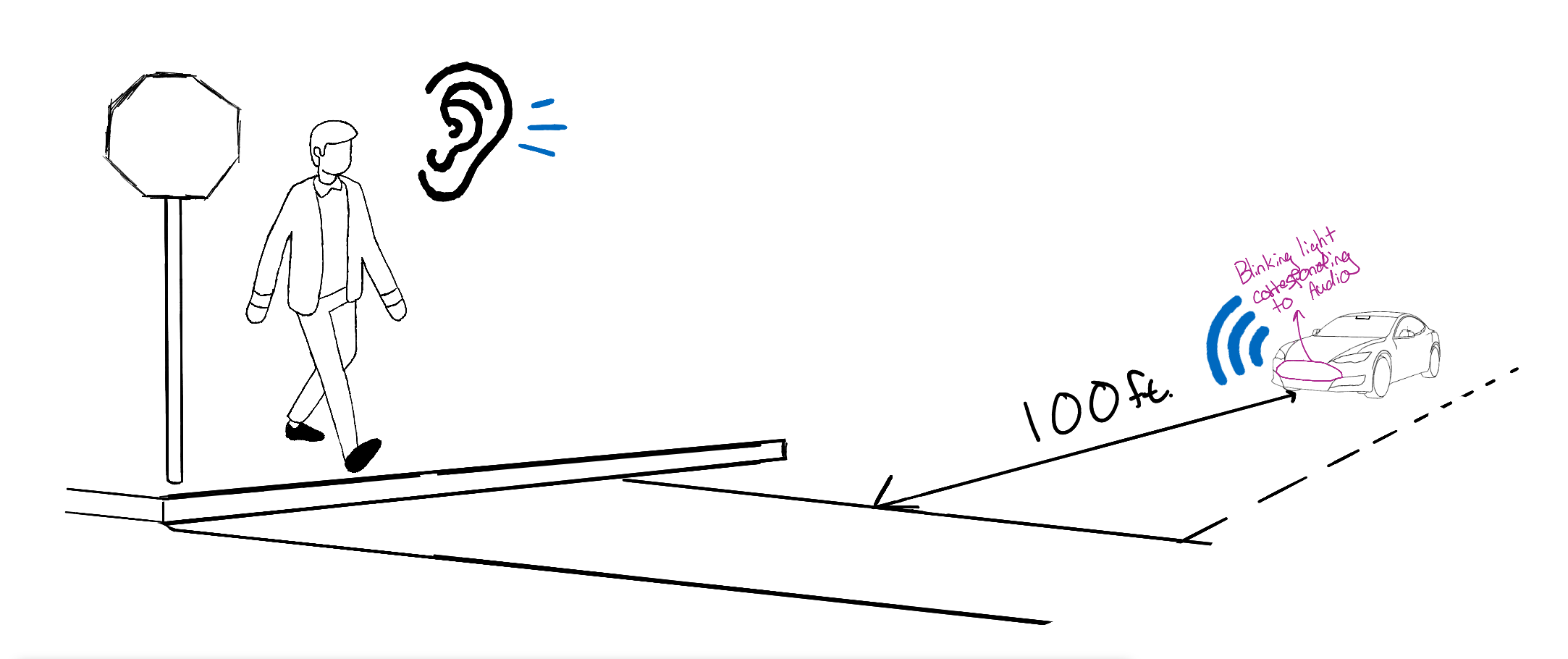

Adaptive Tone Signaling

As the vehicle approaches an intersection, the speakers will emit quick bursts of beeps that increase in speed as the vehicle slows down. A quick but not panic-inducing pattern will help ensure that visually impaired pedestrians can recognize the vehicle's presence. Once the AV comes to a complete stop, the tone signaling will cease, allowing the computer voice to guide the pedestrian on how to proceed.
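One way to picture the adaptive tone behavior is as a mapping from vehicle speed to the gap between beeps. The sketch below assumes a simple linear relationship; the constants and the `beep_interval` function are illustrative assumptions and do not come from the design report.

```python
from typing import Optional

# All numeric values are illustrative assumptions, not figures from the report.
MAX_INTERVAL_S = 1.0        # gap between beeps at or above the approach speed
MIN_INTERVAL_S = 0.25       # shortest gap, reached just before the vehicle stops
APPROACH_SPEED_MPS = 10.0   # speed at which tone signaling is assumed to begin


def beep_interval(speed_mps: float) -> Optional[float]:
    """Return seconds between beeps, or None when the tone should be silent."""
    if speed_mps <= 0.0:
        return None  # vehicle stopped: tones cease so the voice prompt can take over
    # Clamp so speeds above the approach speed use the slowest beep rate.
    fraction = min(speed_mps, APPROACH_SPEED_MPS) / APPROACH_SPEED_MPS
    # Linear interpolation: slower vehicle -> shorter interval -> faster beeps.
    return MIN_INTERVAL_S + fraction * (MAX_INTERVAL_S - MIN_INTERVAL_S)
```

Capping the shortest interval is what keeps the pattern quick without becoming frantic; a real system would tune these values with user testing.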
